Low Power Optimizations for a Bluetooth Connected Wearable

WRITTEN BY JUSTIN LAM, FORMER MECHATRONICS DESIGNER AT MISTYWEST

Bluetooth Low Energy (BLE) devices have seen exponential growth over the past 5 years, and companies are constantly adding functionality to their connected products (such as running machine learning on low power devices). For battery-powered IoT devices like wearables, battery life can make or break the product for end users. As devices grow more complex, gaining features, processing power, data rates, and range, the added functionality typically comes at the cost of battery life.

Beyond the negative user experience, poor battery life means frequent charging, which degrades the battery’s long-term health. Lithium batteries have a limited number of charge cycles, so frequent charging leads to faster degradation and a reduction in total charge capacity.

Power consumption issues are typically the result of poor hardware or firmware design, and time or budget constraints do not always allow for another hardware revision to fix them. Fortunately, clever firmware design can often mitigate excessive power consumption. In this article, we’ll discuss how we took a client’s existing hardware for a BLE wearable IoT device and improved its battery life from under 10 days to over 45 days through firmware optimizations alone.

A Primer on Bluetooth

Development of Bluetooth began at Ericsson Mobile in 1989, with the original purpose of building wireless headsets. The technology that grew out of this work is now known as Bluetooth Classic. Through version 3.0, the specification had high power consumption and was not appropriate for battery-powered sensors with intermittent, low data rate transmission. Bluetooth 4.0 then introduced Bluetooth Low Energy (BLE), designed specifically for the low power IoT sensor industry.

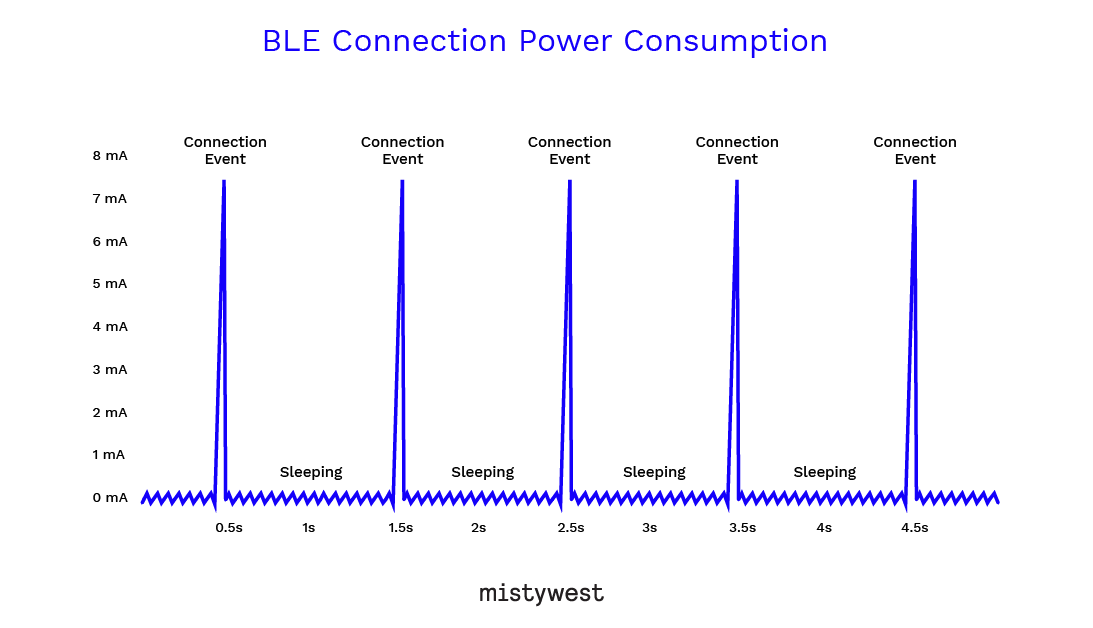

Bluetooth LE provides considerably lower power consumption and cost while maintaining a similar communication range. The protocol allows the BLE radio to sleep for the majority of the time between RF events, keeping average current consumption in the µA range.

This allows devices to operate on coin cell batteries with a lifetime of years. The maximum data throughput depends on the version of BLE as well as the connecting host device. Practical maximum throughput:

- Bluetooth 4.0-4.1: 2.13 Kbytes/sec

- Bluetooth 4.2: 28.8 – 126.4 Kbytes/sec

- Bluetooth 5.0: 57.6 – 171.48 Kbytes/sec

BLE Radio

A Bluetooth LE radio can either be integrated with a microprocessor in a single system on chip (such as the Nordic nRF52 series, a common component in many of our projects), or be a standalone integrated circuit (IC) paired with an external host microprocessor. While the integrated option saves board space, an external radio can be more power efficient since the host microcontroller doesn’t need to be on for the radio to operate. The requirements of your specific application will determine which implementation is the most suitable.

BLE Advertising

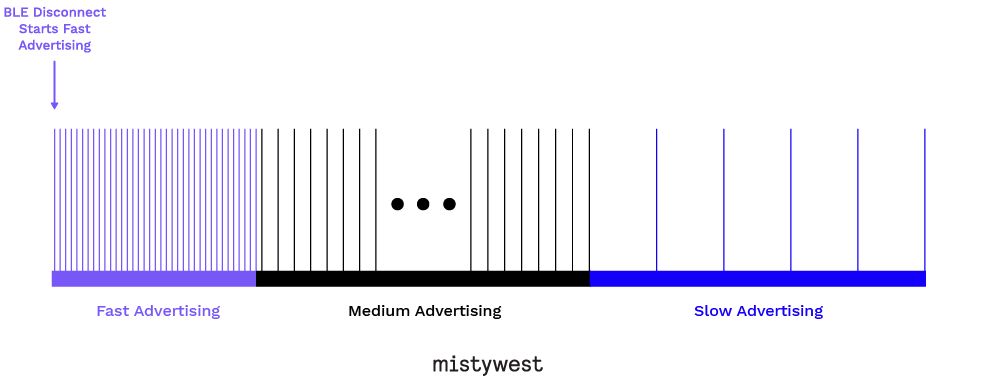

With any wireless protocol, the more frequently a device connects and disconnects, the more power it consumes. With Bluetooth specifically, advertising (when disconnected) consumes significantly more power, since each advertising event typically broadcasts on all 3 advertising channels. Once a connection has been established, the radio uses only 1 channel per connection event and consumption is reduced. Because Bluetooth is a short range protocol, proximity to a host is never guaranteed (nor should it be assumed). Devices must be able to operate efficiently when not connected to a host device for hours or even days at a time.

In firmware, a state machine can be used to optimize the advertising intervals. By starting with “fast advertising”, we can ensure that if a host device is nearby, we can connect to it quickly. However, if one isn’t present for some time, we can lengthen the advertising interval and drop to a “medium advertising” mode. If one is still not present after minutes have elapsed, we can drop further to a “slow advertising” mode, since the probability of a nearby host is low. Typical current consumption values for each of the modes are below, where each successive mode draws several times less current than the one before it:

- Fast = ~480 µA

- Medium = ~78 µA

- Slow = ~18 µA

If the device always stays in fast advertising while disconnected for hours or days, battery life will be significantly shorter than with the staged modes described above. Data throughput can also be managed dynamically: it is maximized only when data transmission is required, and reduced to a keep-alive exchange that simply maintains the connection when no new data is present.
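The staged advertising scheme can be modeled in a few lines to see how much it saves. The following is a minimal Python sketch, not the firmware from this project: the three currents are the typical values listed above, while the `AdvMode` type, the dwell times (30 s fast, 150 s medium), and the function names are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AdvMode:
    name: str
    current_ua: float  # typical average current, from the list above
    dwell_s: float     # ASSUMED time spent in this mode before stepping down


# Currents come from the article; the dwell times are illustrative assumptions.
MODES = (
    AdvMode("fast", 480.0, 30.0),
    AdvMode("medium", 78.0, 150.0),
    AdvMode("slow", 18.0, float("inf")),  # stay here until a host connects
)


def mode_at(elapsed_s: float) -> AdvMode:
    """Return the advertising mode active after `elapsed_s` seconds disconnected."""
    start = 0.0
    for mode in MODES:
        if elapsed_s < start + mode.dwell_s:
            return mode
        start += mode.dwell_s
    return MODES[-1]


def average_adv_current_ua(disconnected_s: float) -> float:
    """Average advertising current (µA) over a disconnected period."""
    if disconnected_s <= 0:
        raise ValueError("disconnected_s must be positive")
    charge_ua_s = 0.0
    remaining = disconnected_s
    for mode in MODES:
        spent = min(remaining, mode.dwell_s)
        charge_ua_s += mode.current_ua * spent
        remaining -= spent
        if remaining <= 0:
            break
    return charge_ua_s / disconnected_s
```

Under these assumed dwell times, `average_adv_current_ua(3600)` works out to roughly 24 µA for an hour without a host, compared with ~480 µA if the device stayed in fast advertising the whole time. In real firmware the same transitions would be driven by timers and reset to the fast mode on disconnect.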

Sensor Polling vs FIFO

On our client’s hardware, data was collected from an inertial measurement unit (IMU) and analyzed to derive insights on the user’s behaviour. The original firmware used a software timer polling implementation, where the microcontroller would wake up, read a sample from the sensor, then go back to sleep. This is inefficient because there is power overhead both in waking up the microcontroller and in reading a single measurement from the sensor, even over a fast SPI interface. The microcontroller draws significantly more current when active (~4 mA) than the sensor does (< 5 µA), leading to a non-trivial amount of excess consumption.

An improvement is to use the IMU’s interrupt-driven, first-in-first-out (FIFO) buffer. In this implementation, the IMU continually stores samples in its internal FIFO, and when a watermark is reached, it wakes the microcontroller with an interrupt so that all stored samples (configurable up to several hundred) can be read in one burst. The power-hungry microcontroller therefore reads and processes hundreds of samples per wake-up instead of one at a time, as in the polling method. Writing to non-volatile memory like flash or EEPROM can also draw a significant amount of current, so reducing the frequency of this operation further increases battery life. Writing to SRAM consumes much less current, and since the microcontroller is always powered, data held in volatile memory is not at risk of being lost. By offloading sample buffering to the lowest power device, we were able to reduce the average consumption by a factor of ~3.
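To see why batching helps, consider a rough duty-cycle model of the microcontroller alone. This is a back-of-the-envelope Python sketch, not a measurement from the client’s device: the ~4 mA active current is from the text above, while the sample rate, the per-wake overhead, and the per-sample read time are assumed values chosen only for illustration, and sleep current is ignored.

```python
MCU_ACTIVE_MA = 4.0         # from the article: MCU current while awake
SAMPLE_RATE_HZ = 100.0      # ASSUMED IMU output data rate
WAKE_OVERHEAD_S = 500e-6    # ASSUMED fixed cost per wake-up (clock start, SPI setup)
READ_PER_SAMPLE_S = 20e-6   # ASSUMED SPI transfer time per sample


def mcu_average_ma(samples_per_wake: int) -> float:
    """Average MCU current (mA) when it wakes once per `samples_per_wake` samples.

    samples_per_wake == 1 models timer polling; a larger value models a
    FIFO watermark interrupt. MCU sleep current is ignored for simplicity.
    """
    if samples_per_wake < 1:
        raise ValueError("samples_per_wake must be at least 1")
    wakes_per_s = SAMPLE_RATE_HZ / samples_per_wake
    awake_s_per_wake = WAKE_OVERHEAD_S + samples_per_wake * READ_PER_SAMPLE_S
    duty_cycle = wakes_per_s * awake_s_per_wake  # fraction of time awake
    return MCU_ACTIVE_MA * duty_cycle


polling_ma = mcu_average_ma(1)    # wake for every sample
fifo_ma = mcu_average_ma(200)     # wake once per 200-sample watermark
```

The fixed wake-up overhead is paid once per sample when polling but only once per watermark with the FIFO, which is where the saving comes from. The size of the saving in this model depends entirely on the assumed timings, so it should not be read as the ~3x system-level figure reported above.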

Peripheral Management

When programming microcontrollers, it’s easy to fall into the habit of enabling peripherals (e.g. I2C, SPI, UART, USB, timers, etc.) without considering how long they stay enabled or their impact on battery life. To truly optimize battery life, peripherals and other resources should be carefully controlled so that they’re disabled when not in use. This is typically done by setting or clearing a bit in a power register. Using a real-time operating system like FreeRTOS can help simplify this management of resources, since tasks can be easily suspended and resumed while maintaining clean code and a clear separation of concerns between modules.
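The enable-use-disable discipline can be illustrated with a small simulation. The Python sketch below stands in for what firmware would do with a real memory-mapped register; the `PowerRegister` class, the bit positions, and the `peripheral` helper are all hypothetical names invented for this example and do not correspond to any particular microcontroller.

```python
from contextlib import contextmanager

# HYPOTHETICAL bit positions in an imaginary power-control register.
SPI_EN = 1 << 0
I2C_EN = 1 << 1
UART_EN = 1 << 2


class PowerRegister:
    """Stand-in for a memory-mapped power register, simulated as an int."""

    def __init__(self) -> None:
        self.value = 0

    def set_bits(self, mask: int) -> None:
        self.value |= mask

    def clear_bits(self, mask: int) -> None:
        self.value &= ~mask


@contextmanager
def peripheral(reg: PowerRegister, mask: int):
    """Enable a peripheral only for the duration of the `with` block."""
    reg.set_bits(mask)
    try:
        yield
    finally:
        reg.clear_bits(mask)  # runs even if the transfer raises


reg = PowerRegister()
with peripheral(reg, SPI_EN):
    pass  # the sensor read would happen here, with SPI powered
# SPI is powered down again once the block exits
```

Tying the enable and disable to a single scope makes it hard to forget the disable on an error path. Note that this simple version does not reference-count, so nesting two blocks for the same peripheral would power it down when the inner block exits.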

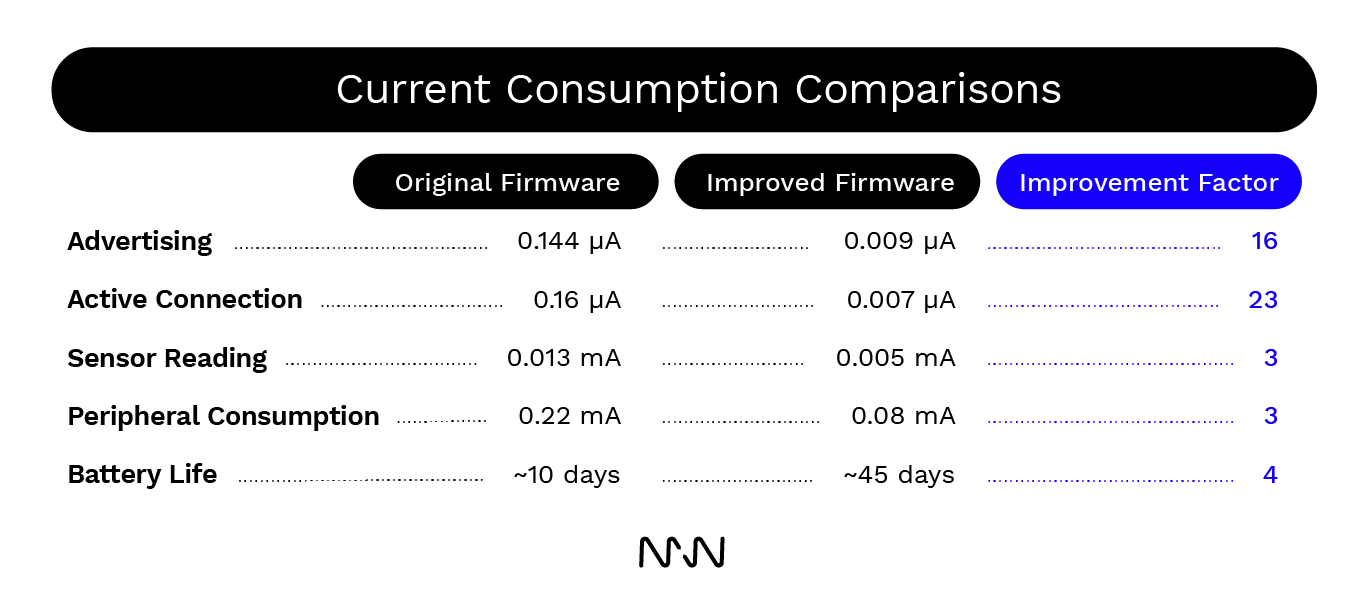

Comparison of Current Consumption

The table below provides a summary of the high level components and the resulting reduction in current consumption. In certain areas, we were able to reduce current consumption by a factor of 3, and in others by orders of magnitude. Ultimately, we were able to increase battery life on a 100 mAh lithium-ion battery from ~10 days to ~45 days.
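The battery life figures can be turned into an implied average current with simple arithmetic. The Python sketch below assumes an ideal battery whose full rated capacity is usable, and it reads the capacity quoted above as 100 mAh; the function names are illustrative.

```python
HOURS_PER_DAY = 24.0


def implied_average_current_ua(capacity_mah: float, days: float) -> float:
    """Average current (µA) that drains `capacity_mah` in `days` days."""
    return capacity_mah * 1000.0 / (days * HOURS_PER_DAY)


def battery_life_days(capacity_mah: float, avg_current_ua: float) -> float:
    """Days of runtime for a given capacity (mAh) and average current (µA)."""
    return capacity_mah * 1000.0 / avg_current_ua / HOURS_PER_DAY


before_ua = implied_average_current_ua(100.0, 10.0)  # about 417 µA
after_ua = implied_average_current_ua(100.0, 45.0)   # about 93 µA
```

Under these idealized assumptions, going from ~10 days to ~45 days corresponds to cutting the average system current from roughly 417 µA to roughly 93 µA. Real cells deliver less than their rated capacity, so actual averages would need to be somewhat lower.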

Conclusion

Always-on, connected devices present unique challenges to both hardware and firmware engineers. In hardware, a host of optimizations can be made including (but not limited to) timing accuracy, radio frequency optimization, antenna design, minimizing leakage current across components, and other fine-tuned power management. Other techniques not covered in this article include taking advantage of the microcontroller’s power-saving features (i.e. doze, idle, sleep, and deep sleep modes), dynamic system clock configuration, setting the system voltage as low as possible, and making sure digital input/output pins are not left floating.

Wireless communication can often consume a large portion of the power budget. As shown in this article, proper design considerations can reduce BLE power requirements to only a small percentage of an application’s current consumption while maintaining reliable performance.