The term LiDAR stands for Light Detection and Ranging. Technically speaking, LiDAR covers a broad range of sensors that use electromagnetic radiation in the visible, infrared, and UV bands to measure distances to objects, but in modern use the term refers to a more specific type of sensor. At the very minimum, a LiDAR is a range sensor capable of doing a line scan, which is achieved by performing spot distance measurements with a laser of a given wavelength and either rotating the entire unit or bouncing the laser off a rotating mirror.

Figure 1: Stanford self-driving car for the 2005 DARPA Grand Challenge. Source: Herox.com

In the early days of autonomous driving, these LiDAR units were mounted on the roof, typically at varying angles with respect to the ground, and used to scan the road ahead of the car. As an example, the Stanford self-driving car used for the 2005 DARPA Grand Challenge (Figure 1) has 5 LiDARs mounted at different angles. This gives the car 5 line scans in front of it at various distances, perpendicular to the car’s motion. As the car moves forward, the data from the LiDARs is assembled to create a 3D map of the road and the obstacles ahead.
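The assembly step can be sketched in a few lines of Python. This is only an illustration, not the Stanford team's software: it assumes the car drives straight along the x-axis with a known position for every scan, and the function names, the mounting height, and the downward pitch are all hypothetical parameters.

```python
import math

def scan_to_points(ranges, angles, pitch, height, car_x):
    """Convert one line scan into 3D points in a fixed world frame.

    ranges : measured distances in meters, one per beam
    angles : beam angle within the scan plane in radians (0 = straight ahead)
    pitch  : downward tilt of the scan plane in radians (assumed known)
    height : mounting height of the LiDAR above the ground in meters
    car_x  : forward position of the car when the scan was taken (assumed odometry)
    """
    points = []
    for r, theta in zip(ranges, angles):
        x = car_x + r * math.cos(theta) * math.cos(pitch)  # forward
        y = r * math.sin(theta)                            # sideways
        z = height - r * math.cos(theta) * math.sin(pitch) # height above ground
        points.append((x, y, z))
    return points

def assemble_map(scans, pitch, height):
    """Stack successive line scans, taken at different car positions, into one cloud.

    scans : iterable of (car_x, ranges, angles) tuples
    """
    cloud = []
    for car_x, ranges, angles in scans:
        cloud.extend(scan_to_points(ranges, angles, pitch, height, car_x))
    return cloud
```

Each scan contributes one line of points, and the car's forward motion supplies the third dimension. A real system would use the full vehicle pose (position and orientation) rather than a single forward coordinate.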

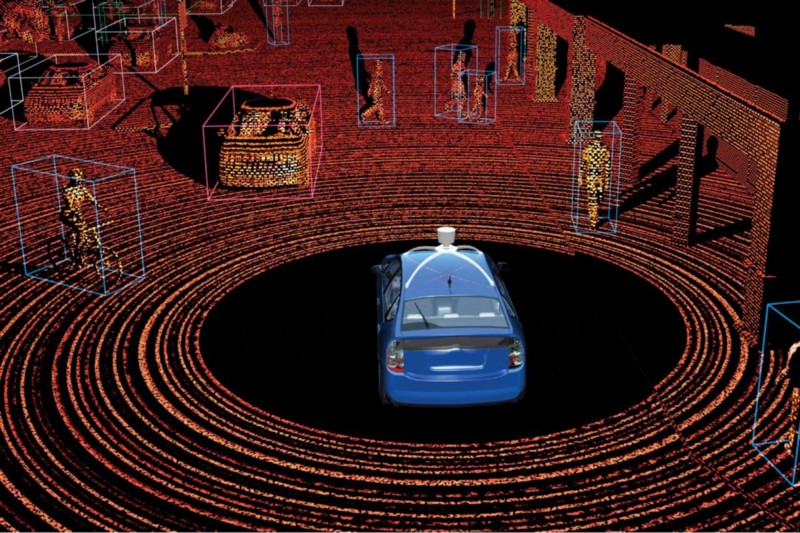

Figure 2: Velodyne LiDAR unit

Figure 3: Velodyne point cloud. Source: http://self-driving-future.com/the-eyes/velodyne/

Modern LiDARs work on the same principle as the Stanford car’s setup, but instead of having multiple separate units, a single unit contains multiple laser-receiver pairs at various angles, as pictured in the Velodyne LiDAR unit in Figure 2. The resulting point cloud is shown in Figure 3, where each circle in the image comes from a single laser-receiver pair being rotated 360 degrees. By counting the circles, you’ll get an idea of how many laser-receiver pairs are in a single Velodyne LiDAR unit. You can also see that the angle of each laser is chosen so as to keep the circles equidistant from each other when scanning a flat surface in front of the car.
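The geometry behind the equidistant circles is simple trigonometry: a laser mounted at height h and tilted down by an angle a hits flat ground at a distance h / tan(a). The sketch below inverts that relation to find the angles that would produce evenly spaced rings. The function name and the numbers are hypothetical and do not describe the actual beam layout of any Velodyne unit.

```python
import math

def beam_angles_for_even_rings(sensor_height, ring_spacing, num_beams):
    """Return the downward tilt (degrees below horizontal) for each laser so that
    the rings it traces on flat ground are ring_spacing meters apart."""
    angles = []
    for k in range(1, num_beams + 1):
        radius = k * ring_spacing  # ground distance of the k-th ring
        angles.append(math.degrees(math.atan2(sensor_height, radius)))
    return angles

# Example: a sensor 2 m above the ground with rings every 2 m.
angles = beam_angles_for_even_rings(sensor_height=2.0, ring_spacing=2.0, num_beams=8)
```

Note that the angular step between neighboring lasers shrinks as the rings get farther away, so evenly spaced rings require unevenly spaced beam angles.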

Solid State LiDARs

A solid state LiDAR is any range sensor that can do 3D scans without any moving parts, which is considered a fundamental necessity if LiDARs are to become more robust and less expensive. Currently, there are various techniques for achieving solid state operation, one of them being the so-called flash LiDAR.

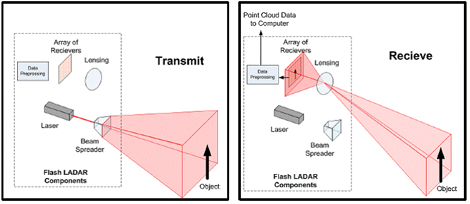

The idea of flash LiDAR is very simple: instead of having multiple laser-receiver pairs, you have one laser with multiple receivers arranged in a grid, similar to the CMOS sensor in a digital camera. The laser is fired through a diffuser that turns the point coming out of the laser into a diffused flash of light. As this light bounces off objects, some of it is reflected back to the flash LiDAR and passes through a lens onto the receivers. Each receiver measures the time between the laser firing and the light arriving, resulting in a depth image very similar to what commercial depth cameras like the Kinect provide. However, flash LiDARs are designed to have a measurement range of 100 meters or more, meaning they need a powerful laser, large and expensive optics, and very sensitive optical receivers, resulting in a unit that is currently too expensive for use in autonomous driving.
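Turning those timing measurements into distances is a one-line calculation: the light travels to the object and back, so the distance is half the round-trip time multiplied by the speed of light. A minimal sketch, assuming the receiver grid is given as a nested list of round-trip times in seconds (the function name is illustrative):

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0

def tof_to_depth(round_trip_times_s):
    """Convert a grid of round-trip times (seconds) into a depth image (meters).

    The factor of 2 accounts for the light traveling out to the object and back.
    """
    return [
        [SPEED_OF_LIGHT_M_PER_S * t / 2.0 for t in row]
        for row in round_trip_times_s
    ]

# Round-trip time for a target 100 m away, roughly 667 nanoseconds.
t_100m = 2 * 100.0 / SPEED_OF_LIGHT_M_PER_S
depth_image = tof_to_depth([[t_100m, t_100m / 2]])
```

The tiny time scale is part of why the receivers are expensive: resolving depth to within a few centimeters means resolving arrival times to within a fraction of a nanosecond.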

Figure 4: Diagram of a flash LiDAR

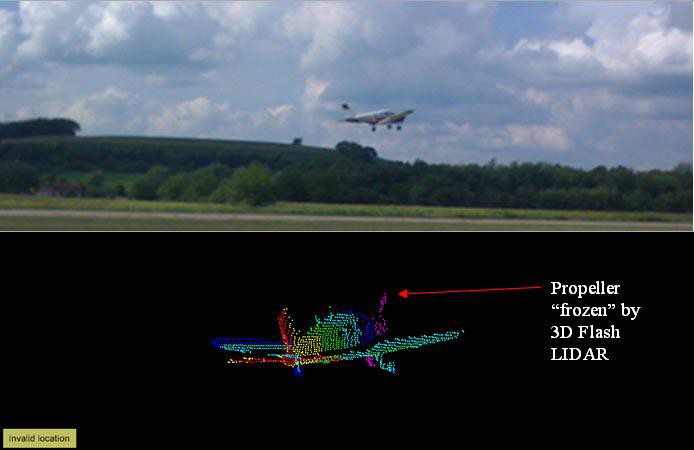

Figure 5: Flash LiDAR image

The real advantage of flash LiDARs (other than their solid-state nature) is that they capture an instant 3D image of the world. In Figure 5, a 3D point cloud captures a plane taking off with its propeller rotating, yet the propeller is captured without distortion. A conventional scanning 3D LiDAR, which builds up its image over time, would have captured the propeller as a disk instead.
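The difference comes down to when each measurement is taken. The toy model below is an assumption-laden sketch, not a simulation of any real sensor: it treats a frame as a sequence of samples, taken either all at once (flash) or spread evenly over the frame time (scanning), and reports the propeller blade's angle at the moment of each sample.

```python
def blade_angle_per_sample(num_samples, frame_time_s, rotation_hz, instantaneous):
    """Return the blade angle (degrees) at the time each sample is taken.

    instantaneous=True models a flash LiDAR: every sample shares one timestamp.
    instantaneous=False models a scanning LiDAR: samples are spread over the frame.
    """
    angles = []
    for i in range(num_samples):
        t = 0.0 if instantaneous else frame_time_s * i / num_samples
        angles.append((360.0 * rotation_hz * t) % 360.0)
    return angles

# Hypothetical numbers: a 0.1 s frame and a propeller turning 2.5 times per second.
flash = blade_angle_per_sample(4, 0.1, 2.5, instantaneous=True)
scanning = blade_angle_per_sample(4, 0.1, 2.5, instantaneous=False)
```

In the flash case every sample sees the blade at the same angle, so its shape is preserved. In the scanning case the blade has moved between samples, which smears it across the frame.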

Semi-Solid State LiDARs

Semi-solid state LiDARs are the kind most likely to be used in production vehicles. They are similar to flash LiDARs but have a 1-dimensional line of receivers instead of a 2-dimensional grid. The laser of a semi-solid state LiDAR hits a rotating mirror followed by a diffuser, which converts the laser dot into a laser line. The line moves back and forth, scanning the surroundings directly ahead and providing a 3D image of the scene. A single laser firing gives an instantaneous set of data points that lie on a line, and the number of points is determined by the number of receivers. A drawback of this design is that it is typically limited to a field of view of less than 180 degrees.
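To make the scanning pattern concrete, here is a rough sketch of how one sweep could be turned into a point cloud. It assumes a vertical laser line swept horizontally, with each receiver looking along a fixed elevation angle. The text does not specify the orientation, so treat that and all the names below as illustrative assumptions.

```python
import math

def sweep_to_points(ranges, azimuths_deg, elevations_deg):
    """Convert one mirror sweep into 3D points (x forward, y left, z up).

    ranges         : ranges[i][j] is the distance in meters measured by
                     receiver j while the mirror is at azimuth i
    azimuths_deg   : mirror angle for each laser firing in the sweep
    elevations_deg : fixed viewing angle of each receiver along the line
    """
    points = []
    for az_deg, line in zip(azimuths_deg, ranges):
        az = math.radians(az_deg)
        for el_deg, r in zip(elevations_deg, line):
            el = math.radians(el_deg)
            x = r * math.cos(el) * math.cos(az)
            y = r * math.cos(el) * math.sin(az)
            z = r * math.sin(el)
            points.append((x, y, z))
    return points
```

Each firing yields one point per receiver, so a sweep of N mirror positions with M receivers produces N × M points.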

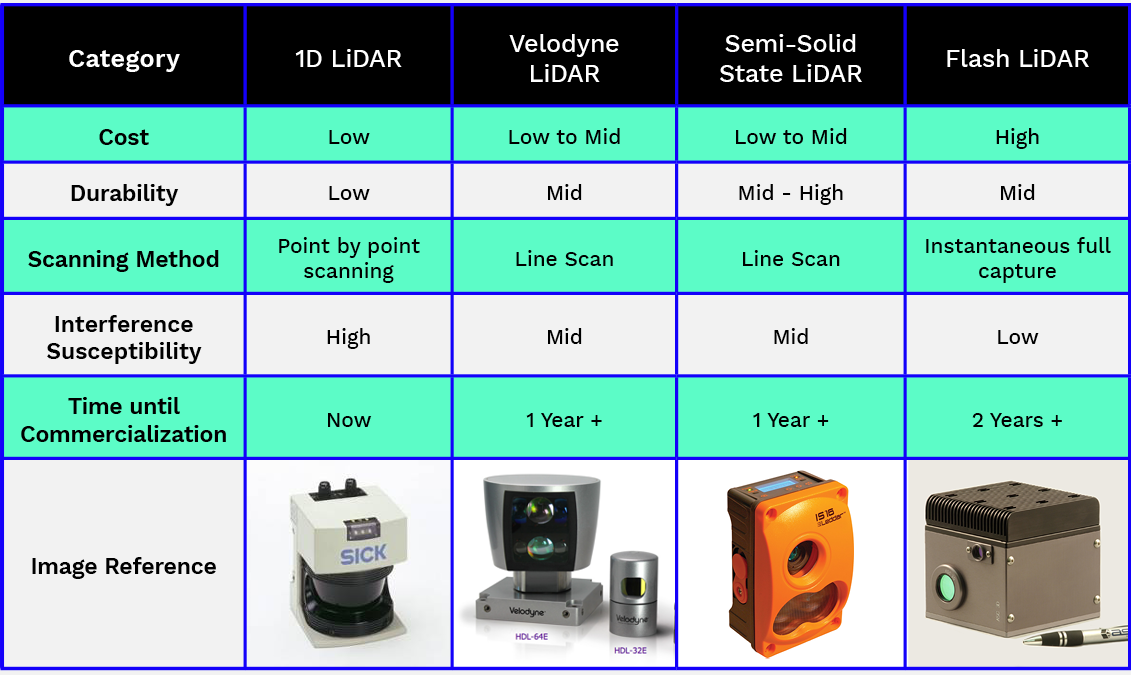

Figure 6: Comparisons of types of LiDAR

Is LiDAR the answer?

When it comes to autonomous vehicles, why use LiDARs over other sensors? The answer varies depending on who you ask. According to Elon Musk, you don’t use LiDAR at all. Instead, Tesla Motors plans to use an array of high dynamic range cameras, which are cheaper hardware but require much more complicated algorithms and more processing power. The benefit is that Tesla doesn’t have to wait for LiDAR technology to mature, and can instead sell vehicles that are ‘hardware ready’ for autonomous driving with continually updating software. Does this approach work? Well, considering that human motorists have two very high resolution, high dynamic range cameras as their primary sensors, it’s definitely possible. Tesla’s vehicles do not possess anywhere near the computing power of a human brain, but recent advances in neural networks and deep learning suggest that a powerful GPU can take on tasks previously thought possible only for humans.

But if you ask companies like Google or Uber, the answer is that LiDAR is the sensor to use. Out of the box, LiDAR gives a full 3D map of the environment, so all that remains is to interpret the point cloud and identify vehicles, pedestrians, the road, and other obstacles. That is a much simpler task than taking the 2D images provided by cameras and inferring 3D information from them. LiDAR is also self-illuminating, so, unlike cameras, it will work in all lighting conditions. All of the above points to LiDAR being the lower-risk choice when it comes to autonomous vehicle programming, but the technology that companies like Uber currently use is not ready to enter the consumer market because of cost and robustness. As it stands, LiDARs can also interfere with one another.

Even with all of their limitations, the measurement precision and scanning capabilities of LiDARs are unmatched, making them the future of the self-driving market. But there is still a lot of work to be done to make LiDARs affordable for the mass market, durable enough to withstand variable weather conditions, and, most importantly, safe enough not to put human lives at risk.