A few weeks back, a handful of brave Westies began a quest to build a robot dog. When this concept was pitched, it was widely regarded as some animatronic dog covered in servos that would walk around – in reality, it’s a Machine Learning and Machine Vision platform.

The goal of the project is to identify and evaluate different real-time MV/ML algorithms for environment mapping and collision avoidance on a mobile, low-power platform. When this thing is done, it’s going to look like $1500 worth of development boards and optics mounted to an RC car… so, what can go wrong?

We plan on using the following approach:

- Some flavour of SLAM for localization

- Monocular vision using a Point Grey camera

- NVIDIA TX1 for low power computing

- Battery powered

The Brains

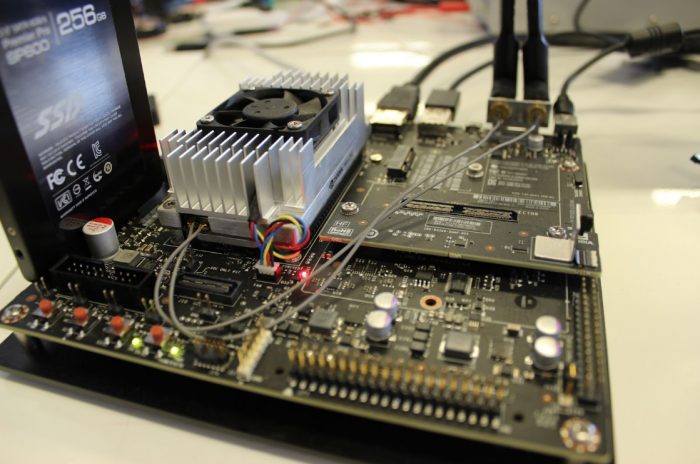

For the brains, we selected the NVIDIA TX1:

This sucker runs a 64-bit ARM Cortex A57, 4 GB of RAM, video encoder and decoder, support for 6 cameras, HDMI, USB, Wi-Fi, Gigabit Ethernet, 3x USART, 3x SPI, 4x I2C, 4x I2S, 256 CUDA cores and more pins than an 18th-century seamstress. This guy is on another plane of computing existence when it comes to SBCs.

The board runs Ubuntu, which is easy to install. We then installed JetPack, an NVIDIA machine learning SDK optimized for this rig (remember those 256 CUDA cores from earlier?).

For the full rundown, check this out.

The Eyes

Every MV system needs a camera, and for this project, Point Grey was the answer:

The camera we selected was the FMVU-03MTM-CS with a Fujinon YV2.8×2.8SA-2, 2.8mm-8mm, 1/3″, CS-mount lens. The camera runs at 752 x 480 and 60 FPS, is monochrome and, most importantly, has a global shutter.
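To get a feel for what that resolution and frame rate mean for the TX1, here is a rough back-of-the-envelope sketch. It assumes 8 bits per pixel for the monochrome output, which is our assumption rather than something taken from the camera's datasheet, and the function name `stream_budget` is made up for illustration.

```python
# Back-of-the-envelope numbers for the camera stream.
WIDTH, HEIGHT, FPS = 752, 480, 60   # from the camera spec above
BYTES_PER_PIXEL = 1                 # assumption: 8-bit monochrome output


def stream_budget(width, height, fps, bytes_per_pixel=1):
    """Return (bytes per frame, bytes per second, milliseconds per frame)."""
    frame_bytes = width * height * bytes_per_pixel
    return frame_bytes, frame_bytes * fps, 1000.0 / fps


if __name__ == "__main__":
    frame_bytes, bytes_per_s, ms = stream_budget(WIDTH, HEIGHT, FPS, BYTES_PER_PIXEL)
    print(f"{frame_bytes} bytes/frame, "
          f"{bytes_per_s / 1e6:.1f} MB/s, "
          f"{ms:.1f} ms per frame")
```

Under that assumption the stream is roughly 21.7 MB/s, and any per-frame processing has about 16.7 ms to finish if it wants to keep up with the camera.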

Traditionally, a rolling shutter camera captures pixel data successively by scanning horizontally or vertically, which means that within a frame not all pixels are captured at the exact same point in time. You typically notice this in pictures of helicopters with the rotors running: the blades seem almost bent. While this is okay for 99% of applications, with machine vision you need a global shutter, which captures all pixels at the exact same point in time.
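The difference is easy to see in a toy simulation. The sketch below is not tied to any real sensor: it moves a one-pixel-wide vertical bar sideways and samples each image row at a slightly later time. The speed and per-row delay are made-up illustrative numbers, and `capture_bar` and `render` are hypothetical helper names.

```python
def capture_bar(rows, cols, bar_x0, speed_px_per_s, row_delay_s):
    """Return the column where a moving vertical bar appears in each row.

    row_delay_s is the time between reading successive rows:
    0 models a global shutter, anything above 0 a rolling shutter.
    """
    positions = []
    for row in range(rows):
        t = row * row_delay_s                        # when this row is sampled
        x = int(round(bar_x0 + speed_px_per_s * t))  # where the bar is by then
        positions.append(min(max(x, 0), cols - 1))   # clamp to the image width
    return positions


def render(positions, cols):
    """Draw the captured frame as ASCII art, one text line per image row."""
    return "\n".join("." * x + "#" + "." * (cols - 1 - x) for x in positions)


if __name__ == "__main__":
    ROWS, COLS = 8, 24
    print("Global shutter:")
    print(render(capture_bar(ROWS, COLS, 4, 2000.0, 0.0), COLS))
    print("Rolling shutter (1 ms per row, illustrative):")
    print(render(capture_bar(ROWS, COLS, 4, 2000.0, 0.001), COLS))
```

With the global shutter the bar stays vertical. With the rolling shutter it comes out slanted, which is the same effect that bends the helicopter rotors, and it is the kind of distortion that would throw off feature tracking on a moving robot.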

The Neck

If our camera is shaking all over the place, we’re not going to be able to make anything of the data. To solve this problem, a hobbyist drone gimbal should do the trick. It can be controlled through some PWM lines to set the yaw, pitch and roll so RoboSteve can look around.
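As a rough idea of what driving those PWM lines could look like, here is a minimal sketch that maps a requested angle to a pulse width. It assumes the common hobby RC convention of a 1000 to 2000 µs pulse centred at 1500 µs and a ±90° range. Those numbers are assumptions, not the spec of any particular gimbal, and `angle_to_pulse_us` and `gimbal_pulses` are hypothetical names.

```python
def angle_to_pulse_us(angle_deg, max_angle_deg=90.0,
                      center_us=1500.0, span_us=500.0):
    """Map an angle in degrees to a PWM pulse width in microseconds.

    Assumed convention: center_us is 0 degrees and +/- max_angle_deg
    maps to center_us +/- span_us. Out-of-range angles are clamped.
    """
    angle = max(-max_angle_deg, min(max_angle_deg, angle_deg))
    return center_us + (angle / max_angle_deg) * span_us


def gimbal_pulses(yaw_deg, pitch_deg, roll_deg):
    """Return the pulse width for each of the three gimbal axes."""
    axes = (("yaw", yaw_deg), ("pitch", pitch_deg), ("roll", roll_deg))
    return {name: angle_to_pulse_us(angle) for name, angle in axes}


if __name__ == "__main__":
    # Look 45 degrees to the right and tilt slightly down, keep roll level.
    print(gimbal_pulses(45.0, -10.0, 0.0))
```

The real timing would be generated by a timer peripheral on the microcontroller. The point here is only the linear mapping and the clamping, so a bad command can't push the gimbal past its limits.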

The Central Nervous System

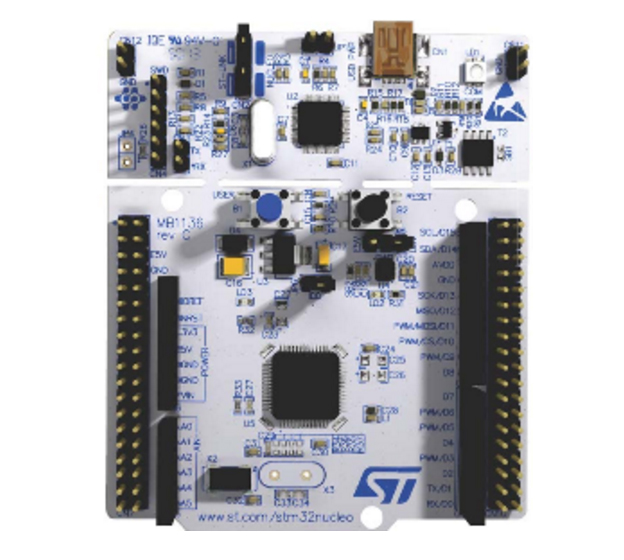

To control all the low-level peripherals, such as the gimbal, motors, LEDs and whatever else RoboSteve will need in the future, I chose one of my all-time favorite microcontroller boards, the NUCLEO-F411RE, which is based on the STM32F411RE.

The beauty of this dev board is that it has an ST-Link built in, which acts as a full SWD debugger, virtual serial port and mass storage device. The USB will plug into the TX1, so I’ll be able to program, debug and communicate over serial all from the main board running OpenOCD, which I’ll be able to SSH into.
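For the "communicate over serial" part, the TX1 and the microcontroller need to agree on some message format. The sketch below is purely hypothetical and is not the protocol RoboSteve uses: it frames a gimbal command as a start byte, a command ID, a payload length, the payload and an XOR checksum. The constants `START` and `CMD_GIMBAL` and all function names are invented for illustration.

```python
import struct

START = 0xAA        # hypothetical start-of-frame marker
CMD_GIMBAL = 0x01   # hypothetical command ID for "set gimbal pulses"


def checksum(data):
    """XOR of all bytes: cheap enough to verify on a small microcontroller."""
    c = 0
    for b in data:
        c ^= b
    return c


def encode_frame(cmd, payload):
    """Build START | cmd | length | payload | checksum."""
    body = struct.pack("<BBB", START, cmd, len(payload)) + payload
    return body + bytes([checksum(body)])


def decode_frame(frame):
    """Validate a frame and return (cmd, payload), or raise ValueError."""
    if len(frame) < 4 or frame[0] != START:
        raise ValueError("bad start byte or short frame")
    cmd, length = frame[1], frame[2]
    if len(frame) != 4 + length:
        raise ValueError("length mismatch")
    if checksum(frame[:-1]) != frame[-1]:
        raise ValueError("checksum mismatch")
    return cmd, frame[3:-1]


def gimbal_command(yaw_us, pitch_us, roll_us):
    """Pack three pulse widths (microseconds) as little-endian uint16s."""
    return encode_frame(CMD_GIMBAL, struct.pack("<HHH", yaw_us, pitch_us, roll_us))


if __name__ == "__main__":
    frame = gimbal_command(1500, 1750, 1250)
    print(frame.hex())
    cmd, payload = decode_frame(frame)
    print(cmd, struct.unpack("<HHH", payload))
```

On the TX1 side these bytes would be written to the ST-Link's virtual serial port, and the firmware on the STM32 would run the matching decode step. A checksum this simple only catches basic corruption, which is probably acceptable over a short USB link.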

The Body

For the body, we picked up an RC car from Best Buy. Nothing too fancy, though.

Next Installment

Read part two of the RoboSteve series.